Explore Bishop Fox's experimental research into applying Large Language Models to vulnerability research and patch diffing workflows. This technical guide presents methodology, data, and insights from structured experiments testing LLM capabilities across high-impact CVEs, offering a transparent look at where AI shows promise and where challenges remain.

SSO Phishing, Patching Failures, Exposed APIs

In this Initial Access podcast episode, we cover how SSO phishing, patching failures, exposed APIs, and zombie infrastructure remind us that basic security hygiene still decides the outcome.

Fueling Security: How a Fortune 500 Utility Stays Ahead of Emerging Threats

A Fortune 500 energy provider faces constant threats from nation-state actors targeting critical infrastructure. Partnering with Bishop Fox for Attack Surface Management and red team assessments, the company gained continuous visibility into their external perimeter...

Deepfakes, Spyware Kits, and LLMs for Hire

In this Initial Access podcast episode, we cover prompt injection, a hijacked Outlook add-in, commoditized mobile spyware, AI executive deepfake scams, IT-to-OT pivoting, and nation-state use of commercial LLMs to accelerate exploitation.

Building Tools: What, When, and How

Surrounded by security tools but still tempted to “just build it”? This hands-on workshop breaks down when custom tooling is worth it, when it’s not, and how to build fast, focused tools without overengineering.

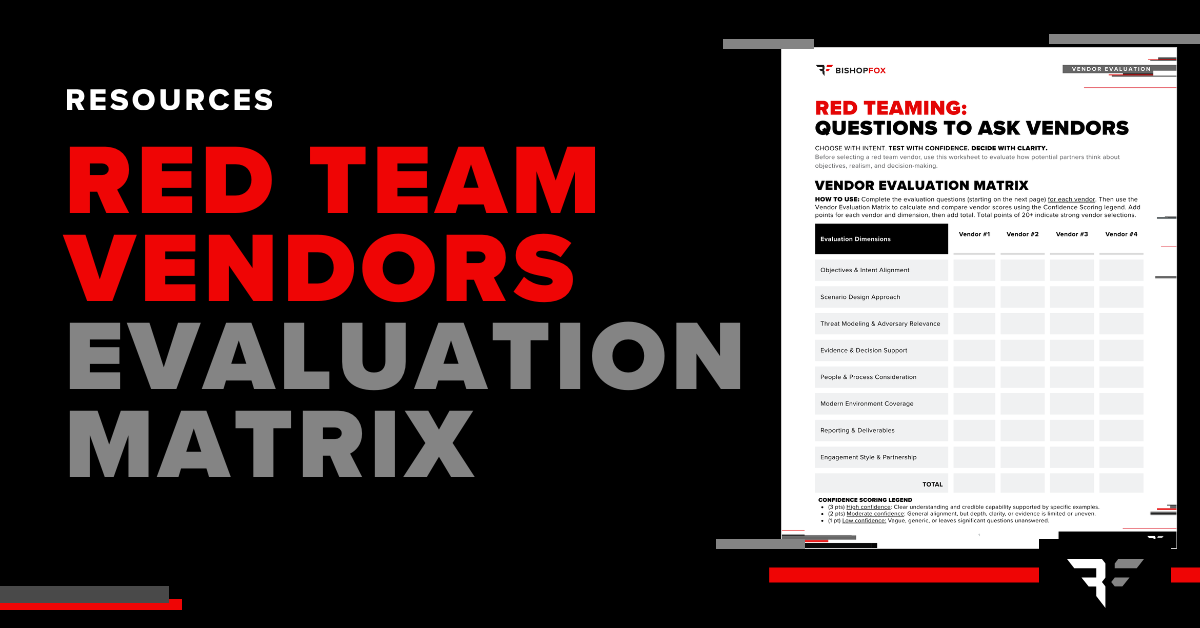

Red Team Vendor Evaluation Worksheet

The Red Team Vendor Evaluation Matrix Worksheet is designed to help security leaders evaluate red team vendors thoughtfully before engagement using a structured, question-driven approach.

Software Policy Rollbacks, Insider Access Abuse, and AI Automation Risk

In this Initial Access podcast episode, we cover the rollback of federal software security guidance, insider-driven access risks, ongoing state-sponsored espionage, and the security implications of giving AI tools deep control over infrastructure.

Application Portfolio Penetration Testing Solution Brief

Download our solution brief. Learn how to secure entire application portfolios with attacker-realistic testing and expert-validated, trusted results.

AI & Security Risks: A Cyber Leadership Panel

Watch a fireside chat with cybersecurity and AI leaders on today’s real AI security risks. Learn where risk is emerging, how leaders set ownership, the true cost of securing AI, and practical steps teams use to protect AI systems and data.

Prompt Injection, Session Hijacking, and Why AI Isn't Writing the Attack Plans Yet

In this Initial Access podcast episode, we cover AI prompt injection risks, continued social engineering via LinkedIn and QR codes, credential theft and session hijacking, patch reliability and appliance security, and how AI is being used to accelerate malware development—distinguishing meaningful risk from overhyped claims.

Application Security: Getting More Out of Your Pen Tests

Application pen tests cost real time and money. Learn how to get real value from them. Bishop Fox lead researcher Dan Petro explains what good app tests include, how to evaluate AI-powered testing, and the questions that matter before and after an engagement.

Fortifying Your Applications: A Guide to Penetration Testing

Download this guide to explore key aspects of application penetration testing, questions to ask along the way, how to evaluate vendors, and our top recommendations to make the most of your pen test based on almost two decades of experience and thousands of engagements.

Sliver Workshop Part 3: Building Better Encoders

In our third Sliver workshop, we explore how Sliver handles traffic encoding by default and how attackers can extend its capabilities with custom Wasm-based encoders. We dive into how Sliver’s encoder framework works, what’s possible with WebAssembly, and how to design and test your own encoders.
